Getty Images

Instagram is overhauling the way it works for teenagers, promising more “built-in protections” for young people and added controls and reassurance for parents.

The new “Teen Accounts” are being introduced from Tuesday in the UK, US, Canada and Australia.

Social media companies are under pressure worldwide to make their platforms safer, with concerns that not enough is being done to shield young people from harmful content.

The NSPCC called the announcement a “step in the right direction” but said Instagram’s owner, Meta, appeared to be “putting the emphasis on children and parents needing to keep themselves safe.”

Rani Govender, the NSPCC’s online child safety policy manager, said Meta and other social media companies needed to take more action themselves.

“This must be backed up by proactive measures that prevent harmful content and sexual abuse from proliferating on Instagram in the first place, so all children have the benefit of comprehensive protections on the products they use,” she said.

Meta describes the changes as a “new experience for teens, guided by parents”, and says they will “better support parents, and give them peace of mind that their teens are safe with the right protections in place.”

However, media regulator Ofcom raised concerns in April over parents’ willingness to intervene to keep their children safe online.

In a talk last week, senior Meta executive Sir Nick Clegg said: “One of the things we do find… is that even when we build these controls, parents don’t use them.”

Ian Russell, whose daughter Molly viewed content about self-harm and suicide on Instagram before taking her life aged 14, told the BBC it was important to wait and see how the new policy was implemented.

“Whether it works or not we’ll only find out when the measures come into place,” he said.

“Meta is very good at drumming up PR and making these big announcements, but what they also have to be good at is being transparent and sharing how well their measures are working.”

How will it work?

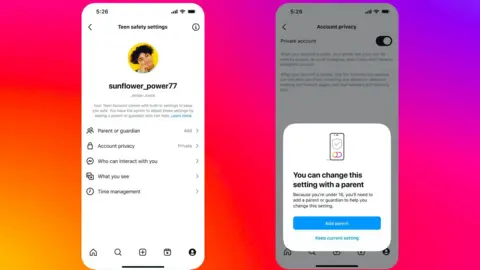

Teen accounts will mostly change the way Instagram works for users between the ages of 13 and 15, with a number of settings turned on by default.

These include strict controls on sensitive content to prevent recommendations of potentially harmful material, and muted notifications overnight.

Accounts will also be set to private rather than public – meaning teenagers will have to actively accept new followers and their content cannot be viewed by people who don’t follow them.

Changing these default settings can only be done by adding a parent or guardian to the account.

Instagram

Parents who choose to supervise their child’s account will be able to see who they message and the topics they have said they are interested in – though they will not be able to view the content of messages.

Instagram says it will begin moving millions of existing teen users into the new experience within 60 days of notifying them of the changes.

Age identification

The system will primarily rely on users being honest about their ages – though Instagram already has tools that seek to verify a user’s age if there are suspicions they are not telling the truth.

From January, in the US, it will also start using artificial intelligence (AI) tools to try to proactively detect teens using adult accounts, to put them back into a teen account.

The UK’s Online Safety Act, passed earlier this year, requires online platforms to take action to keep children safe, or face huge fines.

Ofcom warned social media sites in May they could be named and shamed – and banned for under-18s – if they fail to comply with new online safety rules.

Social media industry analyst Matt Navarra described the changes as significant – but said they hinged on enforcement.

“As we’ve seen with teens throughout history, in these sorts of scenarios, they will find a way around the blocks, if they can,” he told the BBC.

“So I think Instagram will need to ensure that safeguards can’t easily be bypassed by more tech-savvy teens.”

Questions for Meta

Instagram is by no means the first platform to introduce such tools for parents – and it already claims to have more than 50 tools aimed at keeping teens safe.

It introduced a family centre and supervision tools for parents in 2022 that allowed them to see the accounts their child follows and who follows them, among other features.

Snapchat also introduced its own family centre letting parents over the age of 25 see who their child is messaging and limit their ability to view certain content.

In early September YouTube said it would limit recommendations of certain health and fitness videos to teenagers, such as those which “idealise” certain body types.

Instagram already uses age verification technology to check the age of teenagers who try to change their age to over 18, through a video selfie.

This raises the question of why, despite the large number of protections on Instagram, young people are still exposed to harmful content.

An Ofcom study earlier this year found that every single child it spoke to had seen violent material online, with Instagram, WhatsApp and Snapchat being the most frequently named services they found it on.

While they are also among the biggest, it is a clear indication of a problem that has not yet been solved.

Under the Online Safety Act, platforms will have to show they are committed to removing illegal content, including child sexual abuse material (CSAM) or content that promotes suicide or self-harm.

But the rules are not expected to fully take effect until 2025.

In Australia, Prime Minister Anthony Albanese recently announced plans to ban social media for children by bringing in a new age limit for kids to use platforms.

Instagram’s latest tools put control more firmly in the hands of parents, who will now take even more direct responsibility for deciding whether to allow their child more freedom on Instagram, and supervising their activity and interactions.

They will of course also need to have their own Instagram account.

But ultimately, parents do not run Instagram itself and cannot control the algorithms which push content towards their children, or what is shared by its billions of users around the world.

Social media expert Paolo Pescatore said it was an “important step in safeguarding children’s access to the world of social media and fake news.”

“The smartphone has opened up to a world of disinformation, inappropriate content fuelling a change in behaviour among children,” he said.

“More needs to be done to improve children’s digital wellbeing and it starts by giving control back to parents.”